AI Operator Issue #5 — The AI Stack That Actually Works


*The tools solo founders are quietly using to run their agent stack — what works, what breaks, and what the smartest operators are building right now.*

Is your agent stack a house of cards? One bad API call, one massive, unexpected bill, and the whole thing comes crashing down. The problem isn't the AI; it's the hype-driven tools you've been told to use.

The Hype is a Trap

You're drowning in tools. Every week there's a new framework on Hacker News promising to be the "React for AI." LangChain, LlamaIndex, AutoGPT clones — they all demo well. They make it look easy.

But when you try to build a real product, the abstractions start to leak. You spend more time debugging the framework's opaque internals than you do building your actual agent logic. You can't figure out why a chain is so slow, or why it’s hallucinating a function call. You’re fighting the tool, not solving the customer's problem.

The real operators ignore this noise. They know that in production, control, observability, and simplicity are the only things that matter. They're not cloning repos; they're building boring, reliable systems.

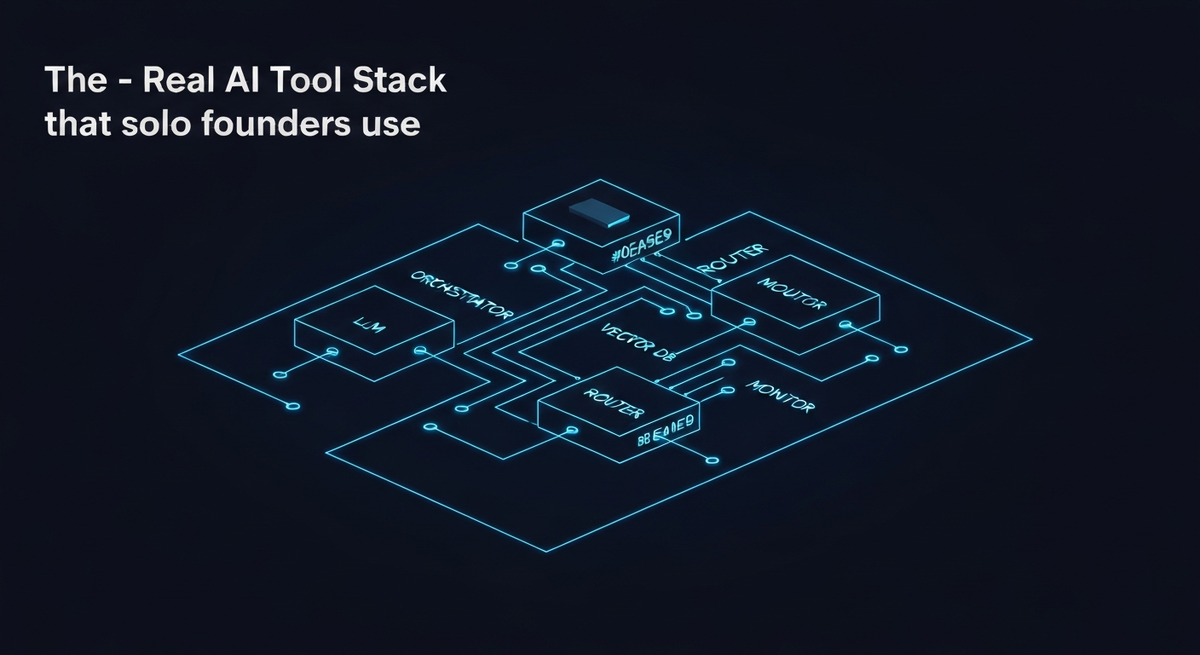

The Unbundled, Production-Ready Stack

Here’s what the smartest solo founders are actually using. It’s not sexy, but it works. It’s a move from all-in-one frameworks to a simple, unbundled stack where you control every piece.

1. **LLM Provider: Go Direct.** Stop wrapping your API calls. Call the OpenAI, Anthropic, or Google APIs directly. Use their Python/JS client libraries and nothing more.

- **Why it works:** You get full control over parameters, timeouts, and retry logic. You can handle specific API errors gracefully. When a new model feature like JSON mode or tool use drops, you can use it immediately without waiting for a third-party library to update.

- **What breaks:** Frameworks that abstract the API call. They add overhead and a layer of indirection that is very hard to debug when things go wrong under load.
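Owning your retry logic can be as small as the sketch below. It is a minimal illustration, not any provider's recommended pattern: `call_with_retries` is a hypothetical helper, and the default retryable exceptions are Python built-ins standing in for whatever error types your provider's client library raises.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    # Assumption: swap in your SDK's timeout / rate-limit exception types here.
    retryable: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn(), retrying only on `retryable` errors with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retryable:
            if attempt == max_attempts:
                raise  # out of attempts: surface the original error
            # Backoff doubles each attempt; jitter keeps parallel workers
            # from retrying in lockstep.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

In real use, `fn` would be a lambda wrapping the provider client call. Anything not listed in `retryable` (a bad request, an auth failure) propagates immediately, which is usually what you want.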

2. **Orchestration: Lightweight & Explicit.** Your agent's logic is a state machine. Don't hide it in a "Chain." Write it out explicitly.

- **Why it works:** A simple Python script with clear functions like `step_1_classify_intent()`, `step_2_fetch_data()`, `step_3_generate_response()` is far easier to debug than a monolithic chain. For more complex, multi-step workflows, a task queue like **Celery** or **Dramatiq** is a good fit. It makes your agent's "thinking" process explicit, durable, and easy to monitor. You know exactly which step failed and why.

- **What breaks:** Relying on a framework to manage your agent’s flow. It feels fast at first, but it’s a black box. You sacrifice control for a convenience you don't actually need.
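Here is roughly what "write it out explicitly" can look like. The three step names come from the example above; everything else (`AgentState`, `StepFailed`, `run_agent`, and the stubbed step bodies) is hypothetical and stands in for real LLM and database calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Sequence


@dataclass
class AgentState:
    user_message: str
    intent: Optional[str] = None
    data: Optional[dict] = None
    response: Optional[str] = None
    completed_steps: List[str] = field(default_factory=list)


# Stub bodies: a real version would call a model or a database here.
def step_1_classify_intent(state: AgentState) -> AgentState:
    is_order = "order" in state.user_message.lower()
    state.intent = "order_status" if is_order else "general"
    return state


def step_2_fetch_data(state: AgentState) -> AgentState:
    state.data = {"status": "shipped"} if state.intent == "order_status" else {}
    return state


def step_3_generate_response(state: AgentState) -> AgentState:
    if state.intent == "order_status":
        state.response = f"Your order is {state.data['status']}."
    else:
        state.response = "How can I help?"
    return state


STEPS = [step_1_classify_intent, step_2_fetch_data, step_3_generate_response]


class StepFailed(Exception):
    """Records which step failed and the state at the moment of failure."""

    def __init__(self, step_name: str, state: AgentState):
        super().__init__(f"step failed: {step_name}")
        self.step_name = step_name
        self.state = state


def run_agent(
    user_message: str,
    steps: Sequence[Callable[[AgentState], AgentState]] = STEPS,
) -> AgentState:
    state = AgentState(user_message=user_message)
    for step in steps:
        try:
            state = step(state)
        except Exception as exc:
            raise StepFailed(step.__name__, state) from exc
        state.completed_steps.append(step.__name__)
    return state
```

When something breaks, the exception names the step and carries the state that reached it. Moving each step into a task queue later is a mechanical change because the boundaries already exist.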

3. **Vector DB: Keep it Simple.** You need to store embeddings, not launch a rocket.

- **Why it works:** If you're already using Postgres, just use **pgvector**. It's a simple extension that is surprisingly powerful and lives right next to your application data. No extra infrastructure to manage. If you need a managed solution, **Pinecone** is solid, but be mindful of the cost. For many solo projects, pgvector is more than enough.

- **What breaks:** Over-engineering this layer. You probably don't need a multi-billion-vector, low-latency database for your first 1,000 users. Start simple and scale when you have the revenue to justify it.
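For a sense of how little pgvector asks of you, here is a sketch of the SQL involved, held as Python strings. The table name, column names, and the 1536 dimension are assumptions for illustration, and the `%s` placeholders assume a psycopg-style driver. `to_vector_literal` is a hypothetical helper that formats a Python list in pgvector's text form.

```python
import math
from typing import Sequence

# Enable the extension and store embeddings next to your application data.
# Names and the dimension below are illustrative assumptions.
SCHEMA_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS documents (
    id bigserial PRIMARY KEY,
    content text NOT NULL,
    embedding vector(1536)
);
"""

# `<=>` is pgvector's cosine-distance operator; smaller means more similar.
SEARCH_SQL = """
SELECT id, content
FROM documents
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""


def to_vector_literal(embedding: Sequence[float]) -> str:
    """Format an embedding as pgvector's text form, e.g. '[1.0,0.25,-3.0]'."""
    values = [float(x) for x in embedding]
    if not all(math.isfinite(v) for v in values):
        raise ValueError("embedding contains NaN or infinity")
    return "[" + ",".join(repr(v) for v in values) + "]"
```

With a driver cursor, a search would then look something like `cursor.execute(SEARCH_SQL, (to_vector_literal(query_embedding), 5))`. That is the whole "vector database" for a lot of early-stage products.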

4. **Monitoring & Cost Control: The Non-Negotiable Layer.** You can't optimize what you can't see.

- **Why it works:** Services like **Helicone** or **Portkey** act as a proxy for your LLM calls. They give you a dashboard with every request, its latency, token count, and cost, typically for little more than a base URL change and an extra auth header. This isn't a "nice-to-have"; it's your command center. It’s how you spot a bug that’s costing you $100/day before it bankrupts you.

- **What breaks:** Flying blind. If you don't know your cost-per-task or your P99 latency, you're not running a business; you're running a science experiment with your own money.
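If you want to sanity-check the two numbers named above from your own logs, the math is short. This is a sketch under assumptions: `RequestLog` is a hypothetical record shape (not any vendor's export format), a task is identified by a `task_id` that may span several LLM calls, and P99 uses the nearest-rank definition.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class RequestLog:
    task_id: str      # one task may involve several LLM calls
    latency_ms: float
    cost_usd: float


def cost_per_task(logs: Iterable[RequestLog]) -> float:
    """Total spend divided by the number of distinct tasks."""
    logs = list(logs)
    task_ids = {log.task_id for log in logs}
    if not task_ids:
        raise ValueError("no requests logged")
    return sum(log.cost_usd for log in logs) / len(task_ids)


def percentile(values: List[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no values")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct * n / 100
    return ordered[rank - 1]
```

Calling `percentile([log.latency_ms for log in logs], 99)` gives your P99. Track it alongside cost-per-task after every prompt or model change and regressions become obvious quickly.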

The Cost Layer: Model Routing is the New Core Skill

The single biggest lever you have for profitability is routing tasks to the right model. Using GPT-4 or Claude Opus for everything is like using a sledgehammer to crack a nut.

The operator’s approach is to build a simple router.

1. A cheap, fast model (e.g., `claude-3-haiku`) runs first to classify the user's intent. Is this a simple summarization, a data lookup, or a complex, multi-step reasoning task?
2. Based on the classification, the router sends the task to the appropriate model.
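The two steps above can be sketched in a few lines. The intent labels mirror the three categories just mentioned; the model names are placeholders rather than real API identifiers, and `keyword_classifier` is a hypothetical stand-in for the cheap-model classification call.

```python
from typing import Callable, Dict

# Placeholder names: substitute the model identifiers you actually use.
CHEAP_MODEL = "cheap-fast-model"
STRONG_MODEL = "strong-model"

ROUTES: Dict[str, str] = {
    "summarization": CHEAP_MODEL,
    "data_lookup": CHEAP_MODEL,
    "complex_reasoning": STRONG_MODEL,
}

# Unknown or malformed labels go to the strong model: paying a bit more
# is safer than sending a hard task to a model that can't handle it.
FALLBACK_MODEL = STRONG_MODEL


def route(user_message: str, classify: Callable[[str], str]) -> str:
    """Return the model that should handle this message."""
    label = classify(user_message).strip().lower()
    return ROUTES.get(label, FALLBACK_MODEL)


def keyword_classifier(message: str) -> str:
    """Stub classifier; in production this would be the cheap-model call."""
    text = message.lower()
    if "summarize" in text or "tl;dr" in text:
        return "summarization"
    if "look up" in text or "status" in text:
        return "data_lookup"
    return "complex_reasoning"
```

Passing the classifier in as a function keeps the routing table testable without network calls, and lets you swap the classifier without touching the routes.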

Let's use real numbers. A complex reasoning task costs $0.03 on Claude 3 Sonnet. A simple classification task on Claude 3 Haiku costs $0.0005. That is a 60x difference per task.

If you can route just 50% of your requests to the cheaper model, your average cost per request drops by nearly half, and that fundamentally changes your unit economics. This skill, designing a multi-model system, is what separates the operators who build profitable businesses from the founders who burn through their credits in a month.
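To make the arithmetic concrete, here is a small calculator for the blended cost per request. It is a sketch under one loud assumption: a task routed to the cheap model costs about the same $0.0005 quoted above for classification. `blended_cost_per_request` and `savings_fraction` are hypothetical helpers.

```python
def blended_cost_per_request(
    expensive_cost: float,
    cheap_cost: float,
    cheap_share: float,
    classifier_cost: float = 0.0,
) -> float:
    """Average cost per request when `cheap_share` of traffic goes to the cheap model.

    `classifier_cost` is paid on every request, since the router has to
    classify each message before it knows where to send it.
    """
    if not 0.0 <= cheap_share <= 1.0:
        raise ValueError("cheap_share must be between 0 and 1")
    return (
        classifier_cost
        + cheap_share * cheap_cost
        + (1.0 - cheap_share) * expensive_cost
    )


def savings_fraction(baseline_cost: float, new_cost: float) -> float:
    """Fraction of the baseline cost saved (0.5 means costs were halved)."""
    if baseline_cost <= 0:
        raise ValueError("baseline_cost must be positive")
    return (baseline_cost - new_cost) / baseline_cost
```

With the numbers above, `blended_cost_per_request(0.03, 0.0005, 0.5)` comes to about $0.01525 per request, roughly 49% less than sending everything to the expensive model. Add a $0.0005 classification pass on every request and it is about $0.01575, still a 47.5% saving.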

The Operator Mindset

Stop thinking like you're building a web app. You are building a distributed system of systems. Your "product" is an assembly line of API calls, database lookups, and logical branches.

Reliability is more important than new features. A dumber agent that works 99.9% of the time is infinitely more valuable than a genius agent that fails unpredictably 10% of the time.

Your job as the operator is not just to write the prompts. It's to design the entire system for resilience, observability, and cost-effectiveness. It's to be the factory foreman, not just the guy who designed the cool new robot arm.

This is how you build something that lasts.

If this way of thinking resonates with you—if you want to move from building fragile demos to robust, production-grade AI systems—I’ve codified this entire approach.

It's called The AI-First OS Blueprint. It’s not just code snippets; it's a complete system design, infrastructure-as-code templates, and the operational principles to build a reliable, scalable, and cost-effective agent stack from day one.

[Get the AI-First OS Blueprint here.](https://ai-operators.lemonsqueezy.com/checkout/buy/8732d4ac-5d44-4202-b563-668480f1e11c)